What Regulators Expect to See When AI Is Used

Artificial intelligence (“AI”) is no longer experimental in public company audits. From risk assessment and scoping decisions to population testing, anomaly detection, and documentation support, AI-enabled tools are increasingly embedded in audit execution and workflow. As use expands, the auditor’s core obligations do not shift to the technology; they remain with the engagement team. If AI is used to inform judgments, to influence the nature, timing, or extent of procedures, or to summarize and interpret information, auditors must still demonstrate that they obtained sufficient appropriate audit evidence and applied professional skepticism throughout. In practice, auditors must understand what the tool is doing, confirm that inputs are complete and accurate, and evaluate whether the outputs are reliable and fit for purpose in the specific audit context.

Although no auditing standard devoted solely to AI has been issued, our experience is that inspectors have been increasingly direct about what they expect to see when AI is used, through staff publications, questions raised in the field, and public remarks. These expectations are grounded in existing standards and longstanding inspection focus areas: audit evidence, supervision and review, professional skepticism, and firm quality control (now quality management). In other words, AI does not create a “new” audit; it amplifies the need to show your work. Firms that treat AI as a “shortcut,” rely on outputs that cannot be explained or reproduced, or fail to govern and document how tools were selected, configured, and monitored are putting the support for their audit conclusions at risk. Conversely, firms that can clearly articulate the purpose of a tool, how it aligns with audit objectives, how inputs and outputs were validated, and how experienced personnel supervised and challenged the results will be far better positioned during inspection.

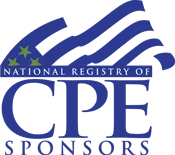

The table below summarizes what inspectors typically expect to see documented when AI is used in a public company audit. Firms can use these themes to evaluate whether their engagement documentation tells a complete story that an experienced auditor (and an inspector) can follow from objective, to procedure, to results, to conclusion.

JGA Key Takeaways:

The PCAOB has been clear: there is currently no separate “AI standard,” and no relaxation of expectations. Existing auditing standards already apply, particularly those governing audit evidence, and inspectors are using them to assess AI-enabled audits. This is the way we see it:

- As a foundational principle, humans remain accountable for the audit. AI may assist, accelerate, or enhance procedures, but responsibility for conclusions rests squarely with the engagement team and the engagement partner.

- AI should be governed much like other critical audit infrastructure: embedded within the firm’s system of quality management rather than operating on the margins. Firms that embed AI within strong quality management, supervision, and documentation practices will be far better positioned to withstand inspection scrutiny.

- Merely retaining AI outputs is not enough. The engagement file must clearly show how the team evaluated, challenged, and corroborated the results, and why it concluded they were reasonable. AI outputs that cannot be explained or reproduced are unlikely to withstand inspection scrutiny, which makes it critical to demonstrate that inputs were reliable and that outputs were validated.

- Work performed with AI is subject to the same supervision and review expectations as traditional audit work. We expect inspection issues to arise when engagement files include AI outputs but little evidence of reviewer challenge or follow-through.

- Inspection risk arises when documentation suggests reliance on AI without evidence of human evaluation, challenge, and decision making.

Final Thoughts

If a regulator cannot see the work, the judgment, or the evaluation in the file, they will likely conclude that the work did not occur, even if it did. This is why documentation is not an administrative afterthought; it is the proof of execution, skepticism, and compliance with auditing standards. Solid documentation is especially critical given how quickly AI tools, and the ways firms use them in audits, are evolving.

Key considerations include clearly documenting why an AI tool was used, which audit objective or assertion it supports, what data inputs and assumptions were used, and what outputs were generated and relied upon. Just as important, engagement files must show how auditors validated, evaluated, challenged, and corroborated AI outputs, including evidence of professional skepticism, supervision, and partner involvement. AI does not create audit evidence on its own; auditors do, and inspectors will expect documentation that makes AI-assisted work transparent, explainable, and defensible. In practice, this requires firms to address AI risks at two levels: firm-wide, through governance, validation, and monitoring; and within each audit engagement, through careful risk assessment, evaluation of AI-enabled audit evidence, and inspection-ready documentation.

Ultimately, AI in auditing is a complex quality issue that cuts across firm-wide quality management and individual audit engagements. Its effective use depends on strong firm-wide governance and equally rigorous engagement-level judgment, making it a critical area of focus for firms committed to sustaining audit quality. Contact your JGA audit quality expert today to schedule a consultation and assess whether your firm’s use of AI is inspection-ready.

At Johnson Global Advisory, we support firms in selecting, implementing, and optimizing AI-enabled audit tools to meet their unique needs. For more insights, visit our blog or contact us to learn how we can help your firm AmplifyQuality®.

For more information, please contact your JGA audit quality expert.
